Beyond the Baseline: Amplifying Your Workforce, Not Replacing It

Most enterprises are currently caught in a "Race to the Bottom"—using off-the-shelf AI to become average at the…


The modern enterprise is currently gripped by a specific kind of organizational panic. Following the rapid ascent of generative AI, leadership teams are facing deafening pressure from boards and competitors to implement automation strategies overnight. This hysteria is fueled by landmark data, such as the June 2023 McKinsey study, which indicates that approximately 62% of business tasks and 44% of management tasks could potentially be automated with existing technology.

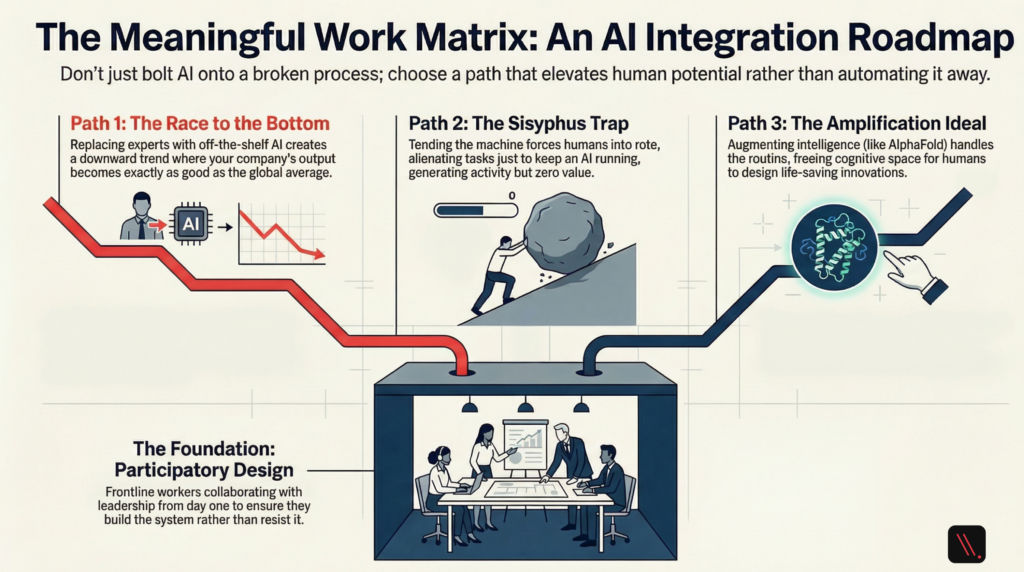

When leaders see these numbers, they don’t see a complex human integration challenge; they see dollar signs and immediate margin expansion. This leads directly into the “Race to the Bottom”—the strategic trap of replacing human experts with off-the-shelf AI to reach an automated baseline. If you use the same tools as your competitors to automate the same tasks, your company’s output inevitably becomes the “global average.” You are becoming average at the speed of light, and your unique competitive advantage is erased.

This “Intentional AI” manifesto argues for a different path. The future of technology must be designed by intent, not by accident. We must move beyond the allure of a cheaper automated baseline and focus on a strategy that amplifies human intelligence rather than commoditizing it.

Applying exponential technology to broken business processes is a recipe for system disintegration. Imagine you operate a logistics company using a creaky, wooden, horse-drawn carriage. Your board demands more velocity, so you spend millions on a state-of-the-art, aerospace-grade jet engine.

Instead of reinforcing the chassis or upgrading the wheels, you simply bolt that jet engine onto the back of the carriage, strap in, and fire it up. The moment the engine reaches full thrust, the wooden wheels splinter into a thousand pieces, the chassis snaps in half, and the horse bolts into a ditch. You are left standing amidst the burning wreckage, blaming the engine for a failure of structural design.

This “bolt-on” mentality leads to two chronic organizational illnesses:

“Pilotitis”: the habit of running continuous, isolated AI experiments that flourish in controlled environments but fail to scale because they aren’t integrated into the operational nervous system. Symptoms include:

The Vanity Metrics Trap: many organizations obsess over “exhaust fumes,” activity dressed up as achievement. Measuring how many prompts were sent or how many employees logged into a chatbot is a comforting lie, because activity is not value creation. Like Sisyphus pushing his boulder, an organization can stay busy forever and get nowhere: no amount of activity counts as progress unless it produces a net positive ROI.

Before deploying AI, an organization must decide which path it is taking. The “Intentional Enterprise” chooses based on human task integrity:

To move from accidental deployment to strategic mastery, we must adhere to four foundational pillars:

DeepMind’s AlphaFold serves as the pinnacle of “Path Three: Amplifying.” By predicting the 3D structure of proteins, the AI solved a 50-year-old biological challenge. It did not replace biologists; instead, it compressed five years of manual research into minutes. This did not leave scientists idle. It freed them to focus on the higher-level cognitive tasks of designing life-saving drugs and plastic-consuming enzymes. It elevated their ceiling, allowing them to solve problems that were previously unreachable.

For AI to be a partner, it must possess “Explicability.” We cannot rely on “black boxes” that offer no insight into their reasoning. This is where Explainable AI (XAI) tools like LIME and SHAP become vital.

SHAP (Shapley Additive Explanations) is rooted in cooperative game theory, specifically the work of Nobel laureate Lloyd Shapley. To understand SHAP, imagine a basketball team. To determine a single player’s value, you compare how the team performs with and without that player across every possible combination of teammates, then average those marginal contributions.
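The basketball analogy corresponds to the standard Shapley value formula from cooperative game theory. In the conventional notation below (the symbols are ours, not the article's), $N$ is the full roster, $S$ is a lineup of teammates that excludes player $i$, and $v(S)$ is the score that lineup would achieve:

$$
\phi_i(v) \;=\; \sum_{S \subseteq N \setminus \{i\}} \frac{|S|!\,\bigl(|N| - |S| - 1\bigr)!}{|N|!}\,\Bigl[v\bigl(S \cup \{i\}\bigr) - v(S)\Bigr]
$$

The bracketed term is player $i$'s marginal contribution to lineup $S$. The weight is the fraction of the $|N|!$ possible orders in which players could join the team where exactly the players in $S$ arrive before $i$, so every order of arrival counts equally.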

SHAP does the same with data features. In a mortgage application, it treats the debt-to-income ratio and the credit score as “players” and quantifies how much each one contributed to a “deny” decision. This creates “Cognitive Fit,” translating mathematical soup into a narrative a manager can act on. The manager can then use their autonomy to override the decision (for example, if they know the applicant is a recent immigrant with a thin but healthy credit file), ensuring the human remains in the driver’s seat.
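To make the mortgage example concrete, here is a minimal Python sketch that computes exact Shapley values by brute force for a hypothetical three-feature risk model. The model, its weights and thresholds, the third feature (`credit_history_years`, added to represent a “thin file”), the reference applicant used to stand in for “benched” features, and the names `risk_score` and `shapley_values` are all illustrative assumptions. This is not the `shap` library, which relies on faster approximations and model-specific algorithms (such as KernelSHAP and TreeSHAP) because brute-force enumeration grows as 2^n in the number of features.

```python
from itertools import combinations
from math import factorial


# Hypothetical toy model: a higher score means the applicant is more likely to be denied.
# Weights, thresholds, and feature names are illustrative assumptions, not real underwriting rules.
def risk_score(applicant: dict) -> float:
    dti = applicant["debt_to_income"]
    credit = applicant["credit_score"]
    history = applicant["credit_history_years"]
    risk = 2.0 * max(0.0, dti - 0.36)                    # penalty for debt-to-income above 36%
    risk += 0.01 * max(0.0, 700 - credit)                # penalty for a credit score below 700
    risk += 0.5 if history < 2 else 0.0                  # "thin file" penalty
    risk += 0.3 if dti > 0.40 and history < 2 else 0.0   # interaction: high debt AND thin file
    return risk


def shapley_values(model, applicant: dict, baseline: dict) -> dict:
    """Exact Shapley value of each feature, found by enumerating every coalition.

    A feature "on the court" takes the applicant's value; a "benched" feature takes
    the baseline (reference applicant's) value. Cost grows as 2**n in the feature count.
    """
    features = list(applicant)
    n = len(features)
    phi = {}
    for f in features:
        others = [g for g in features if g != f]
        total = 0.0
        for k in range(len(others) + 1):
            # Fraction of the n! join orders in which a given set of k teammates precedes f.
            weight = factorial(k) * factorial(n - k - 1) / factorial(n)
            for coalition in combinations(others, k):
                without_f = {g: (applicant[g] if g in coalition else baseline[g]) for g in features}
                with_f = {**without_f, f: applicant[f]}
                total += weight * (model(with_f) - model(without_f))
        phi[f] = total
    return phi


baseline = {"debt_to_income": 0.30, "credit_score": 720, "credit_history_years": 10}
applicant = {"debt_to_income": 0.45, "credit_score": 680, "credit_history_years": 1}

phi = shapley_values(risk_score, applicant, baseline)
for name, contribution in phi.items():
    print(f"{name}: {contribution:+.2f}")
# The contributions add up to the gap between the applicant's score and the baseline's.
print(f"total: {sum(phi.values()):+.2f} = {risk_score(applicant) - risk_score(baseline):+.2f}")
```

Running it attributes about +0.33 to debt-to-income, +0.20 to the credit score, and +0.65 to the short credit history, which together account for the applicant's full +1.18 of risk above the baseline. The 0.3 interaction penalty is split evenly between the two features that trigger it, and the large “thin file” share is exactly the signal a manager would look at before deciding whether an override is warranted.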

The corporate promise of “reskilling” is often a hollow myth. Telling a 50-year-old supply chain manager their job is gone but they can “reskill” into a prompt engineer is a fantasy, not a strategy.

Genuine inclusion requires Participatory Design: bringing frontline workers into the process before a single line of code is written. When employees help map edge cases and train models, their stance shifts from resistance to ownership. When people help build the house, they don’t try to burn it down. Involving domain experts isn’t just ethical; it increases ROI by ensuring the tool actually works in the messy reality of the field.

The “Intentional Enterprise” is a bespoke intelligence system. Because it is deeply integrated into your specific data and shaped by your specific culture, it cannot be replicated by a competitor simply buying the same software. They can buy the algorithm, but they cannot buy the years of intentional integration you have built.

Achieving this requires the “courage of the contrarian”—the willingness to pause and pour a meticulous concrete foundation while your competitors are frantically throwing up cheap drywall. It is the courage to move slowly now so that you can move exponentially faster and safer later.

The call to action is absolute: Use AI intentionally, or not at all.

Finally, I leave you with the Ultimate Question, the one that usually arrives at 2:00 AM when the hype fades: If an AI woke up tomorrow and perfectly replicated your primary technical skill—if your technical output were commoditized to zero—what uniquely human value would you still bring to the table?

Your intuition, your empathy, your moral compass, and your ability to build trust are the only assets that cannot be commoditized. The gap between what a machine can replicate and what only you can offer is where the work of intention must begin.